A mechanism for subjective downward-causation

This article provides a fairly detailed explication of the theoretical science behind downward-causation.

Abstract

In this paper I put forward a theoretical mechanism for apparent causation sourced at the level of the mind, or more specifically the observer. I will discuss theoretical physicist Michael Lockwood's "Many Minds Interpretation" of quantum physics, and elaborate upon it in light of recent advances in our understanding of the nature of consciousness, in particular empirically-testable theories of consciousness based on algorithmic information theory and data compression. I will first explain how recent experimental discoveries in quantum physics have made Lockwood's Many Minds Interpretation more plausible. Critically, I will then show how combining these theories can allow for downward-causation at a subjective level, whereby events appear to be caused not simply by conventional efficient causes (what we also call universal or micro-causes), but primarily by a particular state of mind, or informational state of the observer.

I will then discuss the consequences of the theory: how it predicts the likelihood of unconscious automata in the form of "probabilistic zombies", how it implies subjective immortality, and how it may explain certain synchronicities or coincidences that we experience in everyday life, elaborating on the work of Carl Jung. More generally, I will discuss how it provides a more straightforward and more plausible explanation for the immense complexity of the world around us. Lastly I will discuss what would be entailed in creating an experiment to test this theory.

The State of Quantum Physics

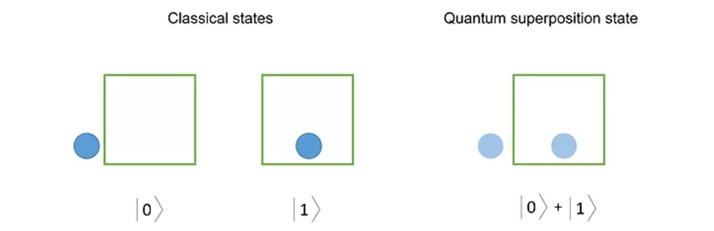

Quantum physics, the science of understanding the nature of the most fundamental elements of the world around us, has been in a state of flux ever since the shock of its discovery. Experiments in which an individual particle produces an interference pattern repeatedly point to the existence of a vast number of "shadows" of each and every particle - described by what is called the particle's wave function. According to it, what we considered to be a single particle actually exists in a vast number of positions and states simultaneously, in what is called a superposition.

How many states? From a mathematical perspective, the wave function - which describes the spread of the particle's possible positions - has infinite extent, meaning that there is an infinitesimally small probability that an electron could be found arbitrarily far away from its previous position. Taken at face value, this would mean that the particle can move faster than the speed of light, which would mean that Einstein's Special Theory of Relativity holds only typically, and not as an absolute rule.

This discovery of Quantum Physics has fundamentally changed our view of reality. Testament to this is the fact that the pioneering physicists who discovered quantum mechanics, such as Heisenberg, Schrödinger, Bohr, Wigner and Pauli, all became spiritual "converts" and went on to show significant interest in mysticism.

So what are we to make of this? According to David Deutsch (Deutsch 1997), the very existence of interference patterns in quantum experiments is proof that there must exist superposed particles, otherwise what exactly would be interfering? If they don't actually exist then they surely would not be able to interfere with themselves. Many leading theoretical physicists attribute the superiority of quantum computing to the fact that the interference of these vast superposed particles can be utilized to perform massive computations in parallel. The reality, as Quantum Computing pioneer (and D-Wave CEO) Geordie Rose has pointed out, is that what we are seeing is not simply a vast number of virtual clones of a single particle, but rather we are confronted with real parallel universes. The multiverse. And quantum computers utilize the parallel processing throughout these universes to gain massive superiority in computing power.

Yet that isn't the whole story because it appears that all-but-one of these superposed particles disappear as soon as they are in any way measured, or, rather, as soon as they come in contact with a different particle. What exactly is happening at this point has been a bone of contention for over a century.

For many years the theory that superposed particles must "collapse" (reduce) into a single particle upon measurement was taught as the standard interpretation of quantum physics, known as the Copenhagen Interpretation. The first problem, however, is that it explained very little. While we do measure only one particle, all that appears to happen is that the alternative, superposed particles stop interfering as soon as one of the particles becomes entangled (involved) with another - so long as that entangled particle becomes entangled with yet another, and so on, all the way to the photon hitting the retina in our eye, or maybe hitting the brain process that formed the conscious moment of observing it. They stop interfering, but we do not know that they disappear. They simply become undetectable to all the particles entangled with it.

And while the Copenhagen Interpretation tells us that the alternative particles disappear from existence at the moment of entanglement, it provides no reason why they disappear, or why one particular value gets chosen over another - seemingly at random. In fact it was this very appearance of randomness that caused Einstein to famously declare in frustration with quantum physics: "God does not play dice!". It appeared to contradict the very causal determinism that he trusted to explain reality.

The second problem is that the hope that these superpositions "collapse" into a single particle under various conditions has faded as experiment after experiment has shown that the superpositions persist at larger and larger scales - perhaps even to the scale of an individual creature.

So if we know these superposed "shadow" particles exist and there is no collapse, where does that leave us? A far more reasonable explanation was put forth in 1957 by physicist Hugh Everett, which he named the Relative State theory, but which was later named the Many Worlds Interpretation of quantum physics by Bryce DeWitt in the 1970s. And it wasn't so much a new theory - it was actually just a simplification of what was already known. He asked: what if there is no collapse at all, and each possible interaction of every possible superposition of every particle actually goes on existing? What if all that happens is that interaction (or, really, entanglement) between one possible particle value and another particle just excludes further interference? (A process that is known as decoherence).

This simple statement had massive ramifications. If that happened then it would mean these lines of possible interactions would each effectively become an entire, separate timeline of a universe. A whole world, in a vast (maybe infinite) number of possible worlds - all of which exist in parallel, totally hidden from one another.

In other words, when we see these "shadow" particles, what we're actually peeking into are "parallel universes", identical to our own except for a slight variation in the position (or other characteristic) of a single particle. As soon as that particle interacts with another particle, it creates yet another parallel universe entirely hidden and protected from the others. Effectively, this is the leading interpretation of quantum theory today.

Acceptance of the idea of parallel universes has certainly entered mainstream physics, if not mainstream culture. It appears not only in the explanation of quantum phenomena, but also when we peer far out into the universe: cosmologists believe that inflation theory, many of whose predictions have been confirmed, also requires these parallel universes (Siegel 2019). To quote Stephen Hawking (Hawking 2001):

There must be a history of the universe in which Belize won every gold medal at the Olympic games, though maybe the probability is low. This idea that the universe has multiple histories may sound like science fiction, but it is now accepted as science fact (by cosmologists, at least).

An informal poll of 72 of the world's leading cosmologists and quantum theorists showed that the vast majority of them were firm believers in the Many Worlds theory, including Stephen Hawking and Richard Feynman. Today that support is even greater.

Competing theories largely depended upon the existence of what were dubbed "hidden variables" - states within particles that we simply hadn't detected yet but that would tell us how a particle is likely to "collapse". Yet these have now been disproven in many ways, most notably by experiments demonstrating the violation of Bell's inequality - such as those by Alain Aspect and others, which were recognized with the 2022 Nobel Prize in Physics. The Many Worlds Interpretation seems more and more likely.

The State of Mind

But a true understanding of the Many Worlds Interpretation shouldn't lead us to think that superposition is limited only to individual particles. Everything around us consists of particles moving about. The vast array of possibilities that can occur through the minute variations in the universal wave function, and the process of entanglement that isolates them, allow for entirely disparate and wildly different timelines to occur in parallel, each with large scale differences.

So it must be recognized, then, that you and I are also made of particles. And that means that we must, also, exist in this state of superposition and that there are therefore many of "me" at any one time. This is an unavoidable conclusion if the Many Worlds Interpretation is true, yet it is immensely profound.

And these copies of me are "branching out", forking into different timelines, each independently, and each experiencing potentially different turns of events. Quantum physics, in fact, allows for quite "magical" events to occur. The size of this wave function, the "cloud" we call a particle, is immense, perhaps even infinite, and this means that a particle has a probability (even though it's small) of seemingly bizarre behavior, for example suddenly appearing 2 feet to the left. An even smaller probability exists that your entire arm would appear 2 feet to the left. Yet being so improbable we do not typically witness these - even though they all occur. How is this so? David Deutsch, the theoretical physicist who invented the quantum computer, believes it is because there are many more of these branches where we necessarily experience only that which makes rational sense. To quote (Deutsch 2019):

If I boil some water in a kettle and make tea, I am in a history in which I switched on the kettle and the water became gradually hotter because of the energy being poured into it by the kettle, causing bubbles to form and so on, and eventually hot tea forms. That is a history because one can give explanations and make predictions about... In some tiny sliver of it, the kettle transforms itself into a top hat, and the water into a rabbit which then hops away, and I get neither tea nor coffee but am very surprised. That is a history too, after that transformation. But there is no [classical] way of correctly explaining what was happening during it, or predicting the probabilities

Our classical way of viewing just one timeline or history at once in some way dictates which of those timelines we experience. But how does this really work?

We know, empirically, that consciousness - our experience of existing - emerges from specific configurations of neuronal activity in the brain. Various forms of brain damage have affected consciousness in ways that make the correlation indisputable, albeit not fully understood. Damage to the thalamus, for example, always causes unconsciousness. So if there is indeed a hard link between neural configurations and consciousness then this means, in particular, that the processes within our brain that give rise to a conscious experience of "me" must also exist in different variations at any one time, in superposition. Thus we must be having different and independent conscious experiences in parallel all the time.

In fact, recent research has shown that our brain is always teetering on the brink of chaos (Science Daily 2009). So what is it that maintains that fragile and temporary order? Consistent with such quantum variations, this appears to suggest that there may be many timelines where the brain does exist in a state of chaos, a chaos that would not allow for consciousness to exist. Yet within that chaos there occasionally emerge connections that are fruitful, that form a conscious moment, and those are the ones that we experience. A kind of neural "fine tuning".

What defines a conscious moment phenomenologically? This is a vast question, and one that we have yet to fully grasp. At its base it is awareness, but not awareness of sense data (e.g. smell, sight or sound), because there are many instances of sense data that we ignore and are clearly not conscious of. Rather, it is something higher-level that constitutes consciousness, more akin to the process of learning from sense data: specifically filtered sense data is applied to update existing neural structures in order to learn something important to our own survival. That is the extent, and thus the limit, of what constitutes a conscious moment, as we shall discuss in some detail.

I emphasize the word "limit" because this is how we can delineate the boundaries of a conscious experience from a neural perspective: only those neural networks within our brain that cause that limited conscious moment constitute the unique and isolated experience of "me" at any moment. And from what we now know about the Many Worlds Interpretation, there must be many such isolated moments that are exactly the same, in parallel, at any one time. And, according to the Law of Identity, those exactly identical conscious moment configurations must be the same person.

But, of course, there must not only be multiple such conscious moments in parallel - but each moment of consciousness must be connecting with multiple entire, distinct parallel timelines, timelines of entire worlds where entirely disparate events may have unfolded. Events that we simply do not know about and thus are not part of the neural configuration that constitutes our conscious moment.

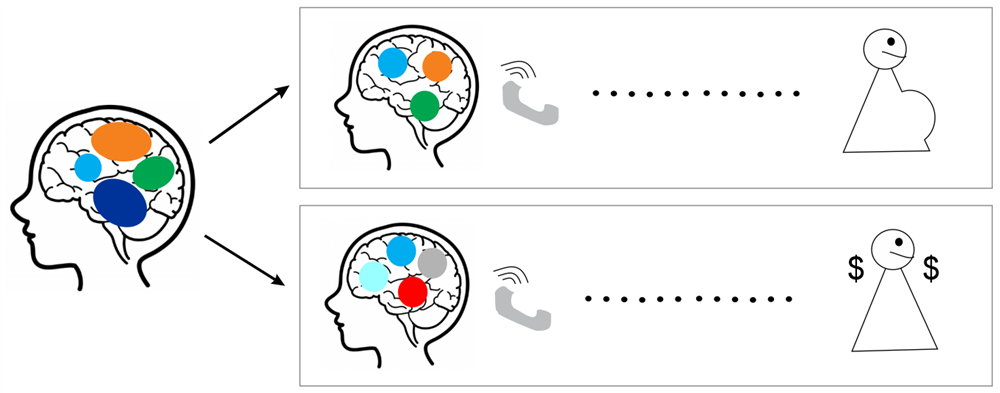

Let's look at an example. Imagine a timeline where the same conscious processing of a person sitting in a chair decides to call a friend they haven't spoken to for many years. In one timeline they find out they have won the lottery, and in another timeline is the same person calling the same friend only to find out they are having a new child. Those are examples of two entirely disparate events.

Both timelines of these events have been entirely hidden from the person and unfold in parallel, on the other side of the world. Both events constitute possible quantum-level divergence: six lottery numbers picked randomly from chaotic neural "noise", a chaos that certainly consists of variations at a quantum level, then applied to fill out a lottery ticket; or the chance happening of the fertilization of an egg - something so utterly complex it must require the perfect alignment of many processes that also vary considerably at a quantum level, meaning there will be timelines in which the fertilization happens with one particular sperm, another sperm or does not happen at all.

So there exist at least two timelines where this person has exactly the same prior state of mind (sitting quietly on a chair) with these two disparate, concurrent events occurring, entirely information-hidden from the person. And we must assume that these parallel persons with exactly the same state of mind must actually all be the same person. But that state of sameness then bifurcates from that point onwards: one moment of consciousness experiencing the lottery phone call, and another experiencing the news of the new child.

Prior to that phone call, we have to assume that there is (effectively) one of this person, experiencing one conscious moment because they do not yet know about the events on the other side of the world.

But the critical question then becomes: during the bifurcation that occurs in the phone call, would there then be two of this person, simply experiencing both events in two different timelines equally, totally unaware of the other's existence? Or do some branches, some possible outcomes, involve "more consciousness" than others? And if so, could it mean that I am more likely to find myself experiencing certain timelines than others based on how conscious I am in them?

Let's take a step back to understand this. This concept of being "more conscious" (or "more present") in certain eventualities (branches) than others is referred to as certain branches having a "greater measure" than others. It simply means that if you mapped out all of the possible timelines from the current moment, of which there could even be an infinite number, there would be a certain percentage where there is the "same you" being conscious (to varying degrees), and a certain percentage where it is either a different person (so different that it wouldn't be considered the same person), or you are not conscious at all. As theoretical physicist Michael Lockwood explains (LOT 2005):

According to the Everett interpretation, when an observable is measured, all possible outcomes occur in parallel. So, as we conventionally apply the concept of probability, the probability of any measurement outcome, regardless of the value of the coefficient associated with the corresponding eigenstate, can surely only be one! [Certain] How, then, are we to reconcile this implication of the Everett interpretation with the appearance, in quantum measurement, of probabilities?

In other words, the "greater measure" idea is a necessary requirement of the Many Worlds interpretation of quantum physics, simply in order to explain why we have such a thing as probabilities (that statistically certain events are more likely to occur, historically, than others). This is because, if every quantum-level possibility occurs, what does it mean to have certain outcomes be more probable than others? They should all occur with equal probability: 100%. However, statistically this is not what happens, and there does seem to be a probability of certain results occurring over others.
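The tension can be made concrete with a small numerical sketch. It assumes the standard Born rule (the weight of an outcome is the squared magnitude of its amplitude), which the text above does not name explicitly; the two amplitudes and both function names are purely illustrative.

```python
import math

def born_weights(amplitudes):
    """Squared magnitudes of the amplitudes, normalised to sum to 1 (Born rule)."""
    raw = [abs(a) ** 2 for a in amplitudes]
    total = sum(raw)
    return [w / total for w in raw]

def naive_branch_count(amplitudes):
    """Treat every branch that exists (non-zero amplitude) as equally likely."""
    live = sum(1 for a in amplitudes if abs(a) > 0)
    return [1 / live if abs(a) > 0 else 0.0 for a in amplitudes]

# Hypothetical two-outcome measurement with amplitudes sqrt(0.9) and sqrt(0.1).
amps = [math.sqrt(0.9), math.sqrt(0.1)]
measure = born_weights(amps)
counting = naive_branch_count(amps)

print("branch measure:", [round(p, 3) for p in measure])
print("naive counting:", [round(p, 3) for p in counting])
```

Both outcomes occur in the Everett picture, so simple branch counting treats them alike, whereas the observed statistics follow the unequal weights. The "greater measure" idea is what is meant to bridge that gap.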

This is the point of departure for Michael Lockwood's theory of Many Minds.

His thesis is that it is indeed likely that we are more conscious in certain of these many-worlds timelines than others, by virtue of how the brain works. He explains in detail how the brain contains within it encapsulated components of "co-consciousness", each of which is like a mind module (MoM 2009) processing one particular aspect of sense data or concept that it creates. When these converge in a certain sense, we experience a unified consciousness: a moment of consciousness.

Certain brain configurations create more consciousness, and others less consciousness. We know this, for example, when we subjectively "skip over" periods of being unconscious - for example when we are in a deep sleep or under anesthesia. We also ignore certain phenomena that are less relevant to us, something that has been proven empirically.

He posited that out of all the possible parallel configurations of these components in our brain, we would be more likely to find ourselves in those superposed brain configurations where there is a greater "maximal phenomenal experience" - a term he uses that is broadly synonymous with actual consciousness. In other words, that those parallel neural configurations that entangle with certain, more relevant disparate (external) events could cause a greater experience of consciousness than others.

He goes on to say that there is a kind of "fifth dimension" - parallel to space and time - that measures the actuality of our consciousness of events passing, a corollary of the necessity to perceive space and time. To quote (LOT 2005):

It seems to me that the twosome of time and space should now be extended to a threesome of space, time, and what I shall call actuality. I here have in mind a formal parallel between the way we use the term ‘now’ and the way in which, in the context of the Everett [Many Worlds] interpretation, it seems natural to use the words ‘actual’ or ‘actually’. There is time, in the sense that there is such a phenomenon. But there is also the time— for example, 7.00 GMT. One is a dimension; the other is a point on this dimension that is subjectively salient— namely, the time that it is now. The actuality that I wish to add to space and time conforms to the same logic. In the context of the Everett interpretation of quantum mechanics, there is actuality in general, which encompasses all terms of a superposition. But once again, there is also what is subjectively salient. By that I mean what we ordinarily think of as actually happening— happening, that is to say, in this term of the superposition as opposed to the others.

In summary, he says that our brain must undergo a superposition of different configurations (which he refers to as combinations of neural components), simultaneously, to reflect its confrontation (quantum entanglement) with different events in each of the parallel timelines. These incur different levels of consciousness in perceiving those events (some we are more conscious of than others), and the measure of this consciousness is what he calls the actuality, which becomes a kind of dimension running directly alongside space and time.

I have illustrated this in figure 3. In this figure we show that, because some of these componentized brain states have stronger relationships, they create greater consciousness, quantified by the degree of that relationship. As Lockwood explains on p291:

..Think instead in terms of a relation of co-consciousness defined upon brain states and events, and allow this relation to be a matter of degree. Two brain states or events are co-conscious, we shall say, if and only if they figure constitutively within the same phenomenal perspective [moment of consciousness].

Returning, then, to the problem of probability, he explains how we only see those results that are ultimately compatible with greater conscious awareness:

According to this version of the Everett interpretation, the subjective probability of an outcome is directly proportional to the size of the region of actuality in which this outcome occurs.

He continues this discussion in MBQ on page 236:

In short, I see the preference of a particular basis [certain probabilities being observed] as being rooted in the nature of consciousness, rather than in the nature of the physical world in general. I do not pretend to know, in general, how and why the eigenstates of a particular set of compatible brain observables correspond to or figure within phenomenal perspectives, while others don't. Yet that does not seem to me to be any more of a mystery than... why only certain aspects of what goes on in the brain registers as consciousness anyway.

Since these ideas were proposed, much has changed in the study of consciousness. The aforementioned mystery of why certain brain configurations cause more consciousness and others cause less consciousness is the very thing we hope to illuminate in this paper when we come to discuss current theories and research into the nature of consciousness.

Lockwood expresses just how fundamental this proposal is to our understanding of reality. He continues (MBQ, p232):

I hardly need say that this is an exceedingly radical proposal. For my own part I would say only that this conception seems to me to be vastly superior to any other proposed interpretation of quantum mechanics, where interpretation is to be contrasted with modification. Consequently, it should, I contend, be regarded as the preferred view, unless and until some evidence emerges that quantum mechanics itself breaks down at the macroscopic level, in such a way as to block the creation of macroscopic superpositions.

By "macroscopic" he means whether these strange quantum effects such as superposition still happen at scales larger than individual particles. It is a pressing question, yet it should be noted that we are now at a point where we have indeed demonstrated the existence of superpositions at a macroscopic scale. In the 1990s we succeeded in seeing interference patterns for molecules that contained 430 atoms. Later the same was done with molecules of 2,000 atoms (Fein 2019), and then whole proteins were shown to be capable of being in superposition (Shayeghi 2020). More recently we've seen experiments trying to push this even further, maybe even to the size of creatures (Lee 2021). At some point we will likely prove that Schrödinger's cat was indeed dead and alive, in parallel universes.

So it seems that the idea of quantum collapse being related to the size of a system is a proverbial red herring. The more proof of macroscopic superpositions we find, the harder it will be to deny the Many Worlds and Many Minds interpretations of quantum physics.

And, as we said, if it is true then we must analyze this apparent mystery of what constitutes a degree of consciousness in the first place. This would indeed hold the key to how this dimension of "actuality", of our reality, unfolds, at least in terms of probabilities. And to understand this, we have to delve into the current theories of consciousness, many of which have made significant progress in this area in recent years in ways that are directly relevant to Lockwood's thesis.

Theories of Consciousness

The question of what consciousness actually consists of is called the "hard problem" for a reason. The explanatory gap between our scientific knowledge of how the brain functions and the phenomenal, subjective aspects of its function has become an impassable gulf. The phenomenal aspect of experience is something we live with and barely notice, because it is so intrinsic to and entangled with existence itself. So far many would say it isn't simply hard, it's downright elusive.

However, that has not stopped us from analyzing what we can know of this consciousness phenomenon and its neural correlation. It must, after all, have a nature that can be studied. Several theories have arisen based on analyzing how the brain processes information, and how states we know to be unconscious change this processing. Yet what is common among these theories is that they are all based upon information theory.

In particular we will briefly look at Integrated Information Theory (Tononi 2014), a theory called Consciousness as Data Compression (Maguire 2005), and another theory that seeks to prove that consciousness is memory integration. All of these are related, and demonstrate the importance of information theory to understanding consciousness. But recent empirical studies demonstrating that human memory works using a specific form of compression lend considerable credibility to the latter two theories.

Integrated Information Theory

Over the past decade or so, the study of consciousness has become far more serious as a scientific discipline. This was led by Giulio Tononi who spearheaded the Integrated Information Theory of consciousness, also known as IIT (Tononi 2014).

IIT basically says that consciousness is fundamentally linked to integrated information processing in the brain. It formalizes a technique to actually measure the amount of consciousness by measuring the integrated information, referred to as Phi (Φ). The human brain, which we know to exhibit consciousness, has a very high degree of integrated information, so it has a high value for Phi. Other complex systems may have smaller, varying degrees of integration that would suggest they may also be conscious, just to a lesser degree.

The ramifications of IIT are quite radical. If it is true, then in theory we could build a "consciousness meter" that would tell us the degree that a system is conscious. This could be quite useful to determine whether patients in a vegetative state were conscious for example.

There are three characteristics that must be exhibited by a system that would have measurable Phi:

Information

What is information? Basically any data that has meaning is considered information. Books contain information, the Internet contains a lot of information. However, as information is in the eye of the beholder, it may sometimes be difficult to discern objectively if a system contains information.

Integration

An integrated system means that the value of the information depends upon all of the parts present. If you can remove a part and it has no impact on the value of the information, then it would not be that integrated. On the other hand, if changing any small part reduces the information value drastically, then it would suggest that the parts are deeply integrated with one another. Measuring integration accurately is hard and time consuming, so IIT makes do with rough estimates based on a primitive part-removal technique.

Maximality

Lastly, IIT says that a system is conscious if it is not actively part of a greater integration. If it is, then the greatest integrated system with the same characteristics would be considered the conscious entity, and not the parts themselves. It is conjectured that during sleep or anesthesia our brain gets fundamentally "split" to cut off the level of integration it usually has when it is awake. This may produce smaller units that themselves have a degree of consciousness (Phi > 0), but they are not conscious to the degree that a typical human brain would be - and thus would not feel conscious.
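To give a feel for what "integration" means in practice, here is a toy sketch. It is not Tononi's Phi: it uses a much simpler stand-in, the mutual information between two parts of a system across a single cut, and the observed states and function names are assumptions made purely for illustration.

```python
import math
from collections import Counter

def entropy(samples):
    """Shannon entropy (in bits) of the empirical distribution of samples."""
    counts = Counter(samples)
    n = len(samples)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def integration_proxy(states, cut):
    """Mutual information (bits) between the parts left and right of `cut`.

    NOT Tononi's Phi: a toy stand-in that is zero when the two parts are
    statistically independent and grows as they share more information.
    """
    left = [s[:cut] for s in states]
    right = [s[cut:] for s in states]
    return entropy(left) + entropy(right) - entropy(states)

# Hypothetical observed states of a two-unit system.
coupled = [(0, 0), (1, 1), (0, 0), (1, 1)]      # the units always agree
independent = [(0, 0), (0, 1), (1, 0), (1, 1)]  # the units are unrelated

print("coupled:    ", integration_proxy(coupled, 1))
print("independent:", integration_proxy(independent, 1))
```

In the coupled system, knowing one unit tells you the other, so cutting the system in two loses information. In the independent system nothing is lost, which is the intuition behind calling it "not integrated".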

IIT is not without critics. Some say it is overly simplistic (yet measured in an overly-complicated way). Others point out that it's fundamentally flawed because it suggests that a large array of XOR gates would be conscious by its definition - yet intuitively we would say that is not the case.

Tononi addressed some of these concerns in later iterations of the theory. But in many ways these issues are resolved by another theory, which also deals with information integration but in a more specific way. What IIT succeeded in doing is drawing the scientific community into the study of consciousness, and in particular into viewing consciousness as a sub-discipline of information theory, which in itself has been a very fruitful endeavor.

Consciousness as Data Compression

A number of discussions have arisen over the past decade about whether the brain utilizes a form of data compression in order to store memories. We have learned a lot about data compression in information theory, because of its practical applications in communicating large amounts of information efficiently. Basically, data compression is the process of making data shorter without losing critical content. It often involves finding redundancy - parts of the source material that are deemed unnecessary and thus can be removed without affecting the overall meaning. It also involves finding patterns, which can then be referenced rather than repeated, to save space.

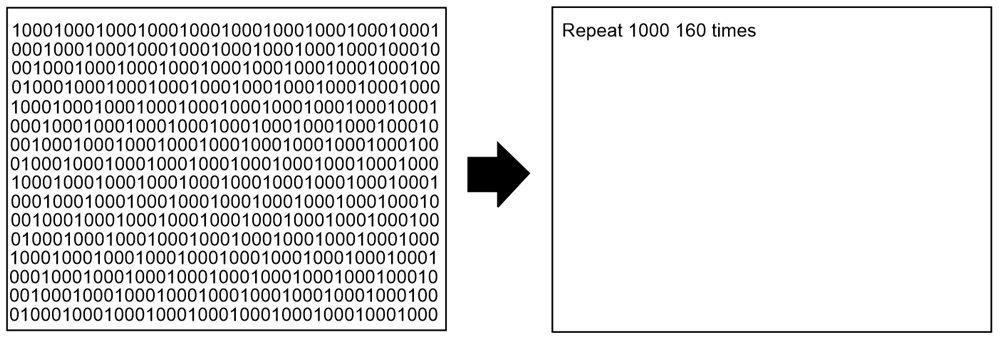

Something called "algorithmic data compression" goes even further than this. It is the creation of a list of commands (a program) that, when executed, would regenerate the original source material using techniques similar to this. For example, a command that says "repeat pattern P 1000 times", with a preceding command that defines pattern P. Such a program would be significantly shorter than the original source material (in this case the pattern P appearing 1,000 times). The analysis of the relationship between algorithms and information is called Algorithmic Information Theory, and this discipline has been surprisingly fruitful in understanding how the brain works.
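The "repeat pattern P 1000 times" example can be sketched directly. The pattern, the tiny program text and the variable names below are illustrative assumptions; `zlib` is included only as a familiar general-purpose compressor for comparison.

```python
import zlib

# Hypothetical source material: the pattern P repeated 1,000 times.
P = "ABCDEFGH"
source = P * 1000

# A tiny "program" that regenerates the source when executed.
program = 'P = "ABCDEFGH"\nresult = P * 1000'

namespace = {}
exec(program, namespace)  # run the program to regenerate the data
regenerated = namespace["result"]

# For comparison, a general-purpose compressor applied to the same data.
packed = zlib.compress(source.encode("ascii"))

print("regenerated matches source:", regenerated == source)
print("source length: ", len(source))
print("program length:", len(program))
print("zlib length:   ", len(packed))
```

The program is a few dozen characters long yet stands in for 8,000 characters of data, which is the sense in which the description is "compressed".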

In computer science, there are two forms of compression: lossy and lossless compression. Lossless compression seeks to store an exact replica of the original source material in the most efficient way possible. It allows for no loss of data, which limits how far the material can be reduced in size. Lossy compression, on the other hand, retains only the critical aspects of the data. The remaining data, deemed unimportant, is discarded. This is how the brain works: it picks out the bits of the sensory data that are important to remember, and replaces those with "pointers" to existing patterns stored in memory, along with anything novel about the experience that may be important in the future.
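The difference between the two forms can be seen in a small Python sketch. The readings in `samples` are made-up numbers, and the `quantize` helper is a hypothetical stand-in for lossy compression in general; nothing here is meant as a model of how the brain actually does it.

```python
import zlib

# Made-up "sensory" readings, repeated so that there is redundancy to exploit.
samples = [0.12, 0.48, 0.51, 0.87, 0.13, 0.49, 0.52, 0.88] * 50

# Lossless: compress and decompress, recovering the data exactly.
raw = ",".join(f"{s:.2f}" for s in samples).encode()
packed = zlib.compress(raw)
restored = zlib.decompress(packed)


def quantize(values, levels=4):
    """Lossy: snap each value to the nearest of a few coarse levels in [0, 1]."""
    return [round(v * (levels - 1)) / (levels - 1) for v in values]


coarse = quantize(samples)

print(restored == raw)                      # lossless round trip preserves everything
print(len(raw), len(packed))                # original size vs. compressed size
print(len(set(samples)), len(set(coarse)))  # distinct values before and after quantizing
```

After quantizing, nearby readings such as 0.12 and 0.13 become indistinguishable. That detail cannot be recovered, which is the price paid for the simpler representation.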

In the competitive environment of natural selection, it would make sense for the brain to use an optimal form of compression when creating memories, as the efficiency this would create would provide a survival advantage over those creatures without this capability. This alone is a good reason to believe that compression could be a central feature of how the brain works, and in particular how it stores memories. Recalling memories would then be a process of decompression.

But perhaps it goes deeper than that. Perhaps it touches on the most fundamental functioning of the brain: consciousness itself. The relationship between data compression and consciousness within a brain has been analyzed in depth in a paper by Phil Maguire called "Consciousness is Data Compression" (CIDC) (Maguire 2005). To quote,

"In this article we advance the conjecture that conscious awareness is equivalent to data compression. Algorithmic information theory supports the assertion that all forms of understanding are contingent on compression (Chaitin 2007). Here, we argue that the experience people refer to as consciousness is the particular form of understanding that the brain provides. We therefore propose that the degree of consciousness of a system can be measured in terms of the amount of data compression it carries out. "

The phenomenon of understanding, which the paper holds to be synonymous with conscious experience, then, is directly correlated to the process of data compression.

Many recent theories of consciousness appear to come to the same conclusion. For example, this technique of compressing source material into the shortest possible form is also a means that is used for measuring the complexity of data, a measure known as Kolmogorov complexity. The idea is that the longer the compressed representation that can recreate the source material, the more complex that source material is considered to be. It's rather interesting that another theory of consciousness has been proposed that quite literally equates consciousness with an increase in the Kolmogorov complexity of information within the brain (Ruffini 2017). But this really just reiterates precisely the same premise behind "Consciousness is Data Compression", as an increase in Kolmogorov complexity necessarily means that compression is occurring, memories are being generated, understanding is occurring, and this has been equated with conscious experience.
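Kolmogorov complexity itself cannot be computed in general, but the length of a compressed file gives a rough upper bound on it. The sketch below uses Python's `zlib` as that stand-in, which is an assumption made purely for illustration; the two byte strings are arbitrary examples.

```python
import random
import zlib


def compressed_length(data: bytes) -> int:
    """Length in bytes of the zlib-compressed data: a crude upper bound on complexity."""
    return len(zlib.compress(data, 9))


patterned = b"AB" * 2000                                # highly regular, 4000 bytes
rng = random.Random(0)                                  # fixed seed for repeatability
noisy = bytes(rng.getrandbits(8) for _ in range(4000))  # pseudo-random, 4000 bytes

print(compressed_length(patterned))  # small: a short description suffices
print(compressed_length(noisy))      # close to 4000: almost no pattern to exploit
```

Both inputs have the same length, yet the regular one shrinks to a tiny fraction of its size while the pseudo-random one barely shrinks at all. That gap is what the complexity measure is meant to capture.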

To quote Maguire from CIDC:

If an organism perceives a stimulus, yet can discern no pattern in the sensory data, then that stimulus will appear completely random and meaningless to the organism: the stimulus will not be experienced at all. On the other hand, if some redundancy can be identified, then the stimulus can be ‘understood’ (i.e. experienced) by relating it to previously gathered sensory information. For example, when people look at an apple, they perceive a round shape by identifying redundancy between the appearance of the apple and previously encountered round objects; they perceive a green color by identifying redundancy between the appearance of the apple and previously encountered green objects. When we ‘see’ an apple we are not just processing an instantaneous visual stimulus but, rather, compressing a set of data which has been gathered over a wide cross section of space and time. The structure of the brain allows a sensory stimulus to be translated into the subjective experience of understanding through the process of compression.

Such a mechanism is echoed in Ruffini 2021:

Conscious experience has a richer structure in agents that are better at identifying regularities in their I/Os streams, i.e. discovering and using more compressive models. In particular, a “more” conscious brain is one using and refining succinct models of coherent I/Os (e.g. auditory and visual streams originating from a common, coherent source, or data accounting for the combination of sensorimotor streams). We may refer to this compressive performance level as “conscious level.”

In other words, we can measure consciousness based on a system's ability to compress input data from its environment, from which it can make a determination about necessary attention - a process critical to its survival.

More recently there have been empirical studies done that confirm that the brain uses data compression as its mechanism to store data in memory (Planton 2021). Those experiments go into significant depth to uncover a kind of language the brain uses, where words represent concepts - much like the patterns that are recognized when compressing data. To quote the conclusion of that study:

Our study provides a first demonstration that, even after accounting for statistical transition probability learning, responses to sequence violations can be used to uncover the properties of the abstract mental language used by individuals to encode sequential patterns. The present proposal, which takes the form of a psychologically plausible formal language composed of a restricted set of simple rules (conforming to a simplicity principle and especially relying on the human ability to detect repetitions), proved to be more effective than alternative approaches in modeling the human memory for simple sequences. The observed relationship between sequence complexity and performance in the detection of violations is consistent with the idea that the brain acts as a compressor of incoming information that captures regularities and uses them to predict the remainder of the sequence.

There are several aspects to the process of finding patterns that correspond to certain aspects of conscious activity. Firstly, there is the first indication that a pattern might exist. This will take the form of noticing repeating phenomena, such as noticing a red hat. But this is weighed against the association of the phenomenon with a potential survival or reproductive benefit, and in particular against whether the pattern is likely to be reused in the future. So if you just witnessed someone being hurt by a man in a red hat, and you see another person with a red hat, that becomes the beginning of a pattern.

And then there is detecting the limits of the pattern. For a pattern to have identity it must be delineated from other patterns. So, getting back to my example: you will naturally start to look for other phenomena that distinguish that characteristic from others. You look for other indications: angry-looking men. Then you notice that the angry-looking men are wearing red caps, and one other lady who looks scared is wearing a wooly red hat. This further refines the pattern you are forming.

This process of forming the pattern, broadly synonymous with the act of learning or memory-formation, is the critical aspect of compression that causes the experience of consciousness, and it therefore means these processes would be more likely to shape our experience. So in amendment to the theories of compression as consciousness, I would add that it is the construction of novel patterns specifically that constitutes a conscious moment (learning), and not simply finding matches to existing patterns (understanding).

The previously mentioned theory, Integrated Information Theory, is also highly related to data compression. The process of compression involves a high degree of Phi, as per IIT's calculations. That's because it involves information, integration (as the stored patterns are critical to being able to decompress memories) and maximality (the entire system is required for maximum compressibility). This is likely why a common means of estimating IIT's Phi efficiently actually measures the Lempel-Ziv compression of neural processes (Vermani 2019).
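To give a feel for what a Lempel-Ziv measure looks like, here is a minimal Python sketch that counts the phrases in an LZ78-style parsing of a binary string. It is a simplified variant chosen for brevity; it is not claimed to be the estimator used in the cited study, and the input strings are arbitrary stand-ins for binarized neural recordings.

```python
import random


def lz78_phrase_count(sequence: str) -> int:
    """Count the phrases in an LZ78-style parsing: more phrases means less regularity."""
    phrases = set()
    current = ""
    for symbol in sequence:
        current += symbol
        if current not in phrases:
            # First time this phrase is seen: record it and start a new one.
            phrases.add(current)
            current = ""
    # A trailing fragment that was already seen still counts as one phrase.
    return len(phrases) + (1 if current else 0)


regular = "0" * 4096                                              # a flat, unchanging signal
rng = random.Random(0)                                            # fixed seed for repeatability
irregular = "".join(rng.choice("01") for _ in range(4096))        # a noisy signal

print(lz78_phrase_count(regular))
print(lz78_phrase_count(irregular))
```

The flat signal is parsed into comparatively few, ever longer phrases, while the noisy one breaks into many short ones. Measures of this family are attractive in practice because they can be computed in a single pass over the data.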

From these various studies and empirical data, it should be quite clear that data compression - information compression - is indeed closely related to conscious experience, and the degree of consciousness seems directly proportional to the amount of active data compression, and specifically pattern forming, that is occurring. The components of the brain, that Lockwood referred to as co-consciousness, are effectively the patterns that ultimately feed the compression. The successful orchestration of these in the act of data compression is what results in a conscious experience.

Consciousness Cut-Off

If we were simply to say that the act of information compression is a conscious event, then that would mean when I zip up files on my computer, I am creating a conscious being, and then destroying it once it's complete. In fact compression is happening all the time in many ways - a single word is the compression of information, the concept it describes. Even a single strand of RNA is compressed information. For those ethicists who believe in the absolute rights of conscious beings, this may cause a serious moral dilemma. It would also give credence to beliefs such as panpsychism, the idea that everything in the universe is conscious to some level.

But there is good reason to believe that is not the case. We know what it is like to be conscious. You are likely (hopefully) conscious as you are reading this. But we also know what it's like to not be conscious. And we also know what it's like to be less conscious, perhaps when we wake in the middle of the night to use the toilet for instance. I have noticed, personally, that during the night when I wake into those states of semi-consciousness, my body reacts with a kind of aversion to any attempt to think of higher level, or more complex concepts. I imagine those higher level parts of my brain are getting their much-needed rest and don't particularly want to be disturbed. Yet somehow I can still function at a lower level without it.

The state of unconsciousness, when we are in a deep, dreamless sleep, is obviously not the same as being dead. Our body continues working, the heart beating, the kidneys filtering, the liver producing enzymes, the immune system fighting off pathogens and our body repairing itself. The brain is also still active at a lower level, entering into a certain pulsating rhythm of electrical activity. We do not know what actual processing the brain is capable of while we are unconscious, but there certainly is some form of processing occurring.

More to the point, the body is still acutely aware of its surroundings. The parts of the brain that can process sensory data are still very much active. For example, events we have tuned out, like a humming fan, are safely ignored by those modules in our brain. But others, for example the cry of a baby or an alarm, will wake us instantly.

This would mean, then, that awareness is not the same as consciousness. This was demonstrated empirically in the Blindsight (Collins 2010) experiments. In these, a man was left blind after part of his brain was removed. However, he was strangely still able to maneuver around a room even though he could not see: he would move and unconsciously avoid obstacles, but have no conscious experience of that processing.

There are many other accounts of such unconscious processing. Another would be sleepwalking (technically called somnambulism) which can involve quite elaborate activity while still apparently unconscious, from walking around the house to practicing karate, preparing food, or even driving a car. Even when under general anesthesia (e.g. during surgery), patients with anesthesia-isolated forearms will respond to questions and move their arm, yet have no recollection of the event after the surgery (Panditt 2015) - or even of any time passing at all. As with sleepwalkers, communication from them is nonsensical. What is common among all these episodes is that, apparently, both memory and rationality are switched off - and that seems to be a signature of unconsciousness.

This appears to be a very similar phenomenon to what we call "zoning out" or "working on auto-pilot". One part of the brain that has been identified as being inactive during this unconscious behavior is called the Default Mode Network (DMN), and has been the subject of several recent studies (Hamzelou 2017). These studies have shown that when the brain is learning, different areas including the DMN are activated. However, when the brain is merely applying that learned behavior, then the Default Mode Network is barely active - and this state is what we consider working autonomously, without higher-level conscious awareness.

All of this can inform us about what, subjectively, it means to be conscious compared to being unconscious. We are conscious when learning, creating new patterns, and thus building information. Yet absent this process, our brain can still function, just at a different level. It can even appear to an observer to be acting perfectly normally. It can drive and perform other functions that it has previously learned. Yet for it to process new information, to learn, it must switch into a conscious state.

So while we can say that the process of compression is directly related to consciousness, it must necessarily include the creation of new patterns, useful patterns, not simply of finding patterns it has previously met. It is not pattern matching, or even just pattern construction, but specifically novelty that becomes a conscious event.

Although this may not be the entire picture either. Hypnosis, for example, can involve learning new techniques while unconscious, yet they are still able to be recalled later - just without explanation, or even with a fabricated explanation. Arguably during hypnosis the patient is not taught novel information, just commands by association. But it certainly justifies further study. As do instances of apparent unconscious problem solving, something famously attributed to mathematician Carl Friedrich Gauss, who claimed to have been working on a proof for two years when suddenly the solution popped into his head. He wrote "Finally, two days ago, I succeeded not on account of my painful efforts, but by the grace of God. Like a sudden flash of lightning, the riddle happened to be solved. I myself cannot say what was the concluding thread which connected what I previously knew with what made my success possible." In other words, Gauss was not conscious of having solved the problem; it happened unconsciously. There are many other similar anecdotes: for example, Paul McCartney claims to have written Yesterday, one of the most popular songs in history, during his sleep - waking up humming the melody with no recollection of where, or how, it arose.

But perhaps this kind of creativity, even problem solving, is qualitatively different than the type of novel information integration that necessitates consciousness. Linking together pre-existing patterns in a creative manner is not necessarily the same as receiving new patterns extrinsically, and perhaps this holds the key to understanding what differentiates a conscious moment from an unconscious one: being shut off from external stimuli, or being unable to process and integrate those external stimuli.

It is worth pointing out that when we discuss these unconscious modes, we are talking about the behavior as occurring in a third party, not ourselves. This is because to ourselves we simply do not experience this unconscious behavior. It can occur in parallel with conscious attention such as mind wandering, or may not occur at all if we are sleeping or under anesthesia.

No doubt, studies will continue even though we seem to have made significant progress in our understanding.

Subjective Downward Causation

So now we have an idea of what might be meant by saying that some brain processes and external events may involve "more consciousness" than others, or even what it means for a process to be conscious or not conscious. Indeed we may even have a way to quantify this consciousness, to come up with a value - even though the precise inputs to that calculation are likely beyond reach. Nonetheless we can estimate whether one event may constitute greater conscious experience than another based upon certain broad characteristics that can tell us about the relative novelty and compressibility of the input data, for survival purposes.
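One crude way to picture such an estimate is to ask how many extra bytes a compressor needs to store a new stimulus given what is already in memory. The Python sketch below does this with `zlib`. The `novelty` function, the example memory and both stimuli are hypothetical, and the measure says nothing about usefulness for survival, which the argument above also requires.

```python
import random
import zlib


def novelty(memory: bytes, stimulus: bytes) -> int:
    """Extra compressed bytes needed to store the stimulus on top of existing memory."""
    with_stimulus = len(zlib.compress(memory + stimulus, 9))
    without_stimulus = len(zlib.compress(memory, 9))
    return with_stimulus - without_stimulus


# Hypothetical memory: one experience, encountered many times.
memory = b"a man in a red hat hurt someone. " * 20

familiar = b"a man in a red hat hurt someone. "   # matches an existing pattern
rng = random.Random(1)                            # fixed seed for repeatability
unfamiliar = bytes(rng.getrandbits(8) for _ in range(len(familiar)))  # no known pattern

print(novelty(memory, familiar))    # small: the stimulus is a pointer to what is known
print(novelty(memory, unfamiliar))  # larger: the stimulus must be stored almost in full
```

A stimulus that merely matches an existing pattern costs almost nothing to store, whereas an unfamiliar one costs roughly its own length. On the account given here, only stimuli that lead to the construction of a new, reusable pattern would count towards conscious experience, so raw incompressible noise would score high on this toy measure without qualifying.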

Now we must consider the fact, as we have seen, that mental states (such as specific memories) can influence the degree of consciousness of future experiences. If existing mental states impact the way we might understand or learn from future events, then that means mental states must also impact how conscious of that event we would be. As we have learned from the discussion on Many Minds above, whether a brain would be more conscious of one event over another is critical to determining what we are more likely to experience, out of all the possibilities available (which may be infinite in number). This would mean that future events that we experience, out of all the possible events allowable by the universal wave function, may be determined (to some degree) by prior thoughts and experiences. In other words that our memory and thoughts may be the greatest influence on how reality unfolds for us. It is this phenomenon that is the basis for what could be considered mental causation, also known as downward causation because of its top-down nature.

While our selves, across the many worlds predicted by quantum physics, may be confronted with a vast number of possible circumstances, each of which has an entire bottom-up history, only certain of those will likely be experienced depending on which of those events involve the construction of more novel observations useful for our survival. This determination would certainly depend on the current contents of our memory, and thus on the state of our mind. In fact what predicates those experiences from a causal perspective would be determined by how the mind is primed, and not simply the efficient causes based on the state of the particles in the universe at that time. In other words, what we are describing could be considered a form of "Mind over matter".

Before we get too carried away with the idea of "mind over matter", I must point out that this phenomenon is significantly constrained. It's not simply that we can "think" anything we like into existence, as if we were in a Harry Potter movie, waving a magic wand and uttering some Latin phrase. The brain is finely tuned to tend to what is critical to its own survival, a set of constraints that are highly complex having developed over millions of years. But it may well mean that what we choose to learn that is relevant to survival could impact what it is we are likely to ultimately experience in the future.

This is clearly a radical conclusion to draw, and one perhaps quite inconsistent with how we have been taught to understand the world around us. Yet the mounting evidence both in terms of how consciousness functions and the nature of reality at a quantum level makes the possibility of this conclusion tantalizingly plausible.

Such a radical conclusion would naturally bring radical consequences, and we will discuss some of those at the end of this paper. One consequence is that, so it would follow, the more we are able to understand, the more we constrain the future according to the reasoning we hold. Memory formation is dependent, after all, on the flowing narrative that it constructs. Future memories, then, must be consistent with those of the past. And this means future events we experience will be shaped by what we do (or don't) recall.

It's worth noting that while we have been looking at this from the perspective of quantum physics, there have been other, entirely independent projects based upon algorithmic information theory and Kolmogorov complexity that have arrived at the same or similar conclusion from mathematics alone. One such theory, dubbed "Zero Worlds" or "Law without Law" (Mueller 2017), has looked at how mathematical models can be created from an observer's perspective based purely upon the highest likelihood of what would be observed next, using Solomonoff induction, which uses Kolmogorov complexity (and thus compressibility) to predict subsequent events. What is surprising is that from this simple top-down approach emerge many of the laws of physics that we assume arose bottom-up. This underscores the fact that ultimately what may be most fundamental in the universe is not some quantum particle field, but rather information itself, being driven by the process of survival and compressibility.

Causal Constraints

As we discussed in the previous section, it is important to stress that by no means does this phenomenon suggest that the human mind can simply think anything it desires into existence. Not only is the specific process of compression the brain utilizes complex and not fully understood, but the mechanism is also likely closely tied to the requirements the brain has to survive. That involves some highly complex negotiations with the laws of physics and the entire history of the world around it, and filtering that determines what is and what isn't interesting to its survival. The core concepts or patterns related to our survival are likely imprinted from genetics that date back millions of years, and experienced through instinct. And then as we explore the world around us, we compress input by referencing these firmly-ingrained core survival concepts, only creating additional concepts extending these patterns as absolutely needed.

And this is a good thing: the rigidity of the world around us, with all its laws and constraints, is - in fact - what allows this rich consciousness to exist in the first place. They are inseparably linked to one another, as a beautiful flower is to its roots hidden deep in the dirt. The entire history that led up to the creation of the human mind, governed by what may be called "natural law", is the richest source of information available to us.

So, unavoidably, the world still operates and evolves according to the laws of nature. Unsuspended bricks still fall down; it seems they cannot be made to suddenly float simply by thinking it so. Yet when we look closely at quantum physics, and the variations of particle positions that are possible in the wave function, it does suggest that it would be possible, just improbable, that some very surprising and unanticipated behavior could actually occur. (Indeed, when we speak of the probability of something occurring, what we are actually talking about is how conscious we would be of such an event occurring. In other words, how compressible it is. Ultimately, this is all that probability is; see Solomonoff's theory of inductive inference for more information.)
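For readers who want the formal statement behind this link between probability and compressibility, Solomonoff's universal prior can be written as follows. Here $U$ is a universal prefix machine, $p$ ranges over programs whose output begins with the string $x$, $\ell(p)$ is the length of $p$ in bits, and $K(x)$ is the Kolmogorov complexity of $x$; these symbols are standard notation introduced here for illustration rather than taken from the text above.

$$
M(x) = \sum_{p \,:\, U(p) = x\ast} 2^{-\ell(p)}, \qquad M(x) \ge 2^{-K(x)}
$$

$$
M(b \mid x) = \frac{M(xb)}{M(x)}
$$

The first line says that a string receives more prior weight the shorter the programs that generate it, so highly compressible observations are the probable ones. The second gives the predicted probability that the next symbol is $b$ after observing $x$.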

Take for example the phenomenon of quantum tunneling. A particle has a set number of positions we may think it could be in at any one time. In this superposition, as we have discussed, it actually exists in all of them at the same time. But what is interesting here is that some of them may be on the other side of a barrier. It's possible, just improbable. As such, this phenomenon allows particles to cross barriers they wouldn't ordinarily be thought capable of crossing. Fluids can leak from or enter into containers. Molecules and processes can be randomly disrupted. Nuclear fusion that fuels the Sun requires quantum tunneling. In fact, it has been hypothesized that the genetic mutations that cause cancer likely originate from a quantum tunneling event involving protein disruption during meiosis. Maybe life itself arose through this same process (Jefferson 2022).
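How improbable "improbable" is can be estimated with the standard wide-barrier approximation for a rectangular barrier, in which the transmission probability falls off roughly as exp(-2κL) with κ = sqrt(2m(V - E))/ħ. The Python sketch below applies it to an electron and a proton. The 1 eV energy deficit and the 1 nm barrier width are arbitrary illustrative values, and the approximation ignores the prefactor of the full formula.

```python
import math

HBAR = 1.054571817e-34         # reduced Planck constant, J*s
EV = 1.602176634e-19           # joules per electronvolt
ELECTRON_MASS = 9.1093837e-31  # kg
PROTON_MASS = 1.67262192e-27   # kg


def log10_transmission(mass_kg: float, energy_deficit_j: float, width_m: float) -> float:
    """log10 of the approximate tunneling probability exp(-2 * kappa * width)."""
    kappa = math.sqrt(2.0 * mass_kg * energy_deficit_j) / HBAR
    return -2.0 * kappa * width_m / math.log(10)


# Illustrative assumptions: particle energy 1 eV below the barrier top, barrier 1 nm wide.
electron = log10_transmission(ELECTRON_MASS, 1.0 * EV, 1e-9)
proton = log10_transmission(PROTON_MASS, 1.0 * EV, 1e-9)

print(f"electron: about 10^{electron:.1f}")
print(f"proton:   about 10^{proton:.1f}")
```

Under these assumptions the electron gets through a few times in every hundred thousand attempts, while the proton, about 1,800 times heavier, is suppressed by nearly two hundred orders of magnitude. The probability never reaches zero, but it collapses very quickly as mass and barrier size grow.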

It seems that quantum tunneling, the ability for us to be conscious of particles in seemingly impossible (but actually just improbable) locations, could be at the root of many unexpected events in history. And it could allow for them in the future.

Theoretically it is possible for my entire body to suddenly move a foot to the left, because of the variation possible in every particle in my body. It is possible, just incredibly, maybe infinitesimally improbable. Yet in the Many Worlds interpretation of quantum physics, there is indeed a universe, a parallel timeline, where this must occur. So why don't we experience it? The answer, as we have discussed, is that as a seemingly unexplainable anomaly, it would be less useful as a pattern, so therefore less compressible, less understandable, thus we are less likely to be conscious of it occurring. And so, in general, we don't experience it.

Could we easily change our state of mind to be able to be conscious of such things? It would be unlikely because our brain has been intrinsically programmed to expect the world to work a certain way, for a certain stability and necessary predictability. This is essential for our survival, and any attempt to reprogram such a fundamental thing would surely jeopardize its very existence. And this effectively is what Markus Mueller (Mueller 2017) posited: do the laws of nature create us, or do we create the laws of nature because our nature is to observe predictability? Predictability may be "boring", but it is essential to the very stability we call survival that allows us to exist in the first place.

Ramifications and Further Discussion

Emergent Complexity

The most complex form we know to exist in the entire universe is the human brain. Life itself is extraordinarily complex, even though to us it often appears facile. How could it have arisen from such simple processes? This pressing question of abiogenesis has yet to be answered satisfactorily. But, as we have seen, there are mechanisms, such as quantum tunneling, that could allow for the possibility of complex life to arise (Jefferson 2022), given sufficient opportunity. Such occurrences would be unlikely, incredibly rare in fact, but still possible. But this is the key. In fact, if you consider that every possible particle interaction, as dictated by what may be an infinite wave function, actually takes place in its own parallel timeline, it would allow for an unfathomable number of developments to occur - and with it, timelines with unfathomable complexity. Including life.

Such complexity is necessarily "always there", and the only barrier to experiencing those would be the compatibility of the event with current conscious experience. Yet conventionally when we see complexity we look for micro or foundational causes, arising from the bottom upwards. This universal causation is naturally how we think about any event, because reason allows us to easily probe such causes through empirical methods and apply this for practical gain, in particular for survival. Indeed it is the cornerstone of the scientific method and reductionism in general. When those causes seem possible but highly unlikely, where does that leave us? Shrugging our shoulders and saying "it was just a fluke"? When we look at the unfathomable complexity of various biological processes, things that seem far beyond our own intelligence to create, is "random fluke" really the best explanation we have? It could actually be that the vast improbability of such events is telling us something important about cause and effect, something that we have been missing all this time.

Some scientists have recently started to question whether we have cause-effect upside down (Ball 2022). To quote Philip Ball, in an article in New Scientist about this very turn of events in our understanding of causality:

The problem with the reductionist approach is apparent in many fields of science, but let’s take applied genetics. Time and again, gene variants associated with a particular disease or trait are hunted down, only to find that knocking that gene out of action makes no apparent difference. The common explanation is that the causal pathway from gene to trait is tangled, meandering among a whole web of many gene interactions.

The alternative explanation is that the real cause of the disease emerges only at a higher level. This idea is called causal emergence [or downward causation]. It defies the intuition behind reductionism, and the assumption that a cause can’t simply appear at one scale unless it is inherent in microcauses at finer scales.

The same, it should be noted, could also be said of cancer. For many years we have attempted to treat cancer by knocking out certain low-level mechanisms of how cancer cells function, by blocking specific cellular pathways, only to find a short while later that they have found an alternative route - often in ways that make you seriously ponder on whether the cancer is exhibiting intelligence. It appears that cancer gets its footing not necessarily in these low level processes, but rather at a higher level, which it maintains through a form of downward causation.

He goes on,

Causal emergence seems to also feature in the molecular workings of cells and whole organisms, and Hoel and Comolatti have an idea why. Think about a pair of heart muscle cells. They may differ in some details of which genes are active and which proteins they are producing more of at any instant, yet both remain secure in their identity as heart muscle cells – and it would be a problem if they didn’t. This insensitivity to the fine details makes large-scale outcomes less fragile, says Hoel. They aren’t contingent on the random “noise” that is ubiquitous in these complex systems, where, for example, protein concentrations may fluctuate wildly.

As organisms got more complex, Darwinian natural selection would therefore have favored more causal emergence – and this is exactly what Hoel and his Tufts colleague Michael Levin have found by analysing the protein interaction networks across the tree of life. Hoel and Comolatti think that by exploiting causal emergence, biological systems gain resilience not only against noise, but also against attacks. “If a biologist could figure out what to do with a [genetic or protein] wiring diagram, so could a virus,” says Hoel. Causal emergence makes the causes of behavior cryptic, hiding it from pathogens that can only latch onto molecules.

Could, then, downward causation help explain the vast (yet seemingly fragile) complexity of life and the universe around us? Could we say that in some sense the mind causes the brain, rather than the other way around? If the micro-causes seem possible but highly improbable, it would certainly seem plausible not only as an explanation, but maybe even a better explanation for how such complexity arose. The very existence of such improbable complexity could itself be supporting evidence that downward causation is at work. And perhaps looking at things from this perspective may in itself help pave new approaches to problems that have all but reached a dead end in biology.

On The Possibility of Unconscious Automata

We spoke earlier of how some processes within our brain are less conscious than others. As we also discussed, there are likely many actions we could make that we wouldn't be conscious of at all. I think this is familiar to most of us as it is demonstrated by the phenomenon of sleepwalking, of acting on "auto-pilot", and unconscious actions while a patient is under anesthesia. We discussed this in detail in the previous section.

As we have seen, in the multiverse there are a vast number of behaviors that you perform that you will not be phenomenally conscious of. Yet the question remains: will anyone consciously see you make those actions? Could there be a conscious person in that same parallel timeline that observes your unconscious behavior? The answer appears to be that quite possibly they will. When observing another person, you will be conscious of the behavior you expect to see, or that is most understandable or conscious to you. However, when that doesn't match with what they themselves can be conscious of, because of their mental state, then you will be observing someone who is behaving as if they are conscious, but who is not necessarily conscious.

A person who is behaving as if they are conscious but who is actually not conscious is known in philosophy circles as a probabilistic zombie, or a behavioral zombie. (They are similar in some ways to the p-zombies or philosophical zombies of David Chalmers, except they are predicated upon their behavior alone, not their particle-for-particle consistency as Chalmers would have it.) They are unconscious automata, moving according to your subjective expectations without having the kind of conscious experience that comes with their own deeper thinking.

This phenomenon is also predicted by Markus Mueller's theory of Law without Law (Mueller 2017). In his example he looks at two observers, one called Alice and the other Bob. Each experiences only what is probable for them, based on the compressibility of possible experiences. He then considers the possibility of a conflict arising between the observed behavior and the probability of experience of each individual:

A priori, both probabilities can take different values. However, if they are in fact different, then we have a quite strange situation, reminiscent of Wittgenstein’s philosophical concept of a “zombie”: Bob would in fact not observe what Alice sees Bob observe, but would divert into his own “parallel world” with high probability. [This would] mean that Alice is confronted with some sort of “very unlikely instance” of Bob, and that the Bob that she knew earlier has somehow subjectively “fallen out of [her] universe”.

What would it feel like to be such a "zombie"? It would be impossible to know for sure, but we could hypothesize that it would be similar to how one feels when acting on "autopilot": for example, performing actions without thinking (for instance, driving or riding a bicycle through habit, or playing the piano). Such a state typically exhibits a primitive awareness but lacks higher-level processing and memory formation, the kind of processing that we have come to see as resulting in information compression. One acts with a kind of confusion or lack of understanding.

Empirical studies, known as the "Blindsight" experiments (Collins 2021), have demonstrated our ability to act seemingly intentionally but entirely unconsciously. Such experiments indicate that there is such a phenomenon as acting unconsciously. The precise degree to which this happens, and whether it is the "default" mode of existence for non-human, more primitive animals, is an area that remains to be studied. Yet it is encouraging that there could be some empirical evidence for the unconscious-but-behaviorally-indistinct probabilistic zombies that our theory predicts. And if not whole beings, then at the very least moments of such unconscious, automatic behavior.

Subjective Immortality

Within the constant branching of the universe, with every possibility playing out, there are likely timelines, occurring with a certain frequency, in which your life ends. In fact, according to the Schrödinger equation, our body must deteriorate constantly in multiple branches. Do we experience such an ending in those particular timelines, say every few seconds?

This raises an important question: is it even possible to experience your existence stopping? Certainly, if (as we have theorized) you are more likely to find yourself (be fully conscious) in timelines where there is greater conscious experience, it would seem unlikely (if not impossible) to experience a timeline where there is no conscious experience at all. It is even unlikely you would find yourself in a timeline where there is less conscious experience. That would mean that, subjectively, you will probably always find yourself in a timeline where you have averted a situation that involves you ceasing to be conscious.

How this averting of death occurs will depend on what is actually physically possible. It may be that you are in a bad car accident: you fall unconscious but then wake up recovering from surgery that saved your life. In some timelines the surgery was not possible, and you died, but you would not experience those. Depending on the circumstances and what is possible, you may find yourself in extremely improbable scenarios - "miracle cures", and events so outlandish that you may even find yourself putting them down to divine intervention.

This phenomenon has been discussed at length by theoretical physicists and philosophers, who have dubbed it "Quantum Immortality". In light of the understanding of consciousness described in this paper, however, the idea would seem to have been given new life.

The idea gained significant traction with Max Tegmark, who in his book "Our Mathematical Universe" (Tegmark 2014) posed a thought experiment about consciousness subjectively continuing through whichever available timelines allow it to continue, even though in others you may die. He addresses the topic in Chapter 8, proposing an experiment where you toss a coin and, if it comes up heads, something kills you:

"What if the... multiverse is real? Then there would be infinitely many parallel universes to start with that contained you in subjectively indistinguishable mental states, but with imperceptibly slight differences in the initial position and velocity of the coin. After one second, you'd be dead in half of those universes, but no matter how many times the experiment is repeated, there would always be a universe where you never get shot. In other words, this sort of macabre randomized-suicide experiment can reveal the existence of...parallel universes more generally."

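The arithmetic behind this thought experiment can be sketched in a few lines of Python. The function name `surviving_fraction` and the idealized assumption that every round kills you in exactly half of the remaining branches are illustrative choices made here, not something taken from Tegmark's text.

```python
from fractions import Fraction


def surviving_fraction(rounds: int, p_death: Fraction = Fraction(1, 2)) -> Fraction:
    """Fraction of branches in which you are still alive after `rounds` trials.

    Assumption (illustrative only): each trial independently kills you
    with probability `p_death` in every branch that is still alive.
    """
    if rounds < 0:
        raise ValueError("rounds must be non-negative")
    return (1 - p_death) ** rounds


for n in (1, 10, 50):
    frac = surviving_fraction(n)
    # The fraction shrinks geometrically but never reaches exactly zero.
    print(f"after {n} rounds: {frac} of branches survive, nonzero: {frac > 0}")
```

From the outside, the surviving fraction halves with every round, so an observer would almost certainly see you die. It is never exactly zero, however, and this is the point the argument for subjective survival relies on.
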
If immortality is guaranteed, what, then, about old age? Surely at some point you will die of old age? Could there be possibilities where you evade even the eventual degradation of your cells and your brain? Such conjecture is far too hypothetical to allow any firm answer, but nonetheless we can speculate. Firstly, one would presume that consciousness ceases before your body actually dies. As we have discussed, consciousness is a complex function that requires a high degree of functioning, and even minute disruptions in that information integration can cause consciousness to cease (even though the person may appear to be conscious). Secondly, the aging of cells may be related, at least in part, to a process called quantum tunneling, a phenomenon that occurs only with a certain probability - meaning there would be timelines where it would not occur.

So, in the moment before your brain finally goes into an unconscious state from old age, would it not be possible that you find yourself in a timeline where this aging stops, or even reverses? Or perhaps you receive a just-invented medicine that reverses aging, extending telomeres and repairing broken cells? At the moment it seems impossible, but it is not ruled out. Again, if there were a timeline where this is possible - and surely there would be if aging itself is a quantum effect - then naturally the likely scenario would be for that timeline to be experienced, and not death by old age where we fall unconscious, or at least less conscious.

So, you may ask, why don't you see people who are 1,000 years old around you now?

Firstly, we must keep in mind that our bodies are actually billions of years old already - our DNA stretches back very far, and maybe even much further than we realize. Yet consciousness only requires a memory that goes back, say, 15 years with clarity - and the further back, the vaguer it gets and thus the more easily those memories can be replaced with explanations that allow you to live longer and longer.

Secondly, it may simply be that at our age it is unnecessary to have people in our lives who live much beyond our own age; it would be superfluous to current survival needs. The "most compressible" rule would dictate that we don't experience phenomena that are beyond what is necessary for survival. In fact, the existence of extremely old people may make you feel more resilient than you actually are, and thus cause you to take higher risks that threaten your survival. But as time goes on, you'll likely find that more outlandish circumstances arise that allow you to live longer, and then you may well start to see others live a similar amount of time. Over a lengthy amount of time, the requirement for the continuation of consciousness could result in the selection of truly miraculous-seeming solutions for longevity.

Coincidences and Carl Jung's Synchronicities

The theory promoted in this paper is not purely academic; it also makes certain predictions. These predictions may explain not only why there exists such a degree of unexpected [*] complexity around us, but also why certain coincidences (also called synchronicities) arise in everyday life.

I'm sure that the reader, like everyone, has experienced such coincidences, even though we quickly forget their significance: as we learn about their bottom-up causation, each one becomes just another explainable, everyday event. Perhaps this is a good reason to keep a record of such events, so they can be analyzed more objectively after the fact. To add some anecdotes to this section, I will recall a few of my own.

Some examples of coincidences may seem frivolous: a friend in school once told me that the number 147 shows up everywhere. Since that day it truly has shown up everywhere. I frequently look at the clock and it says 1:47pm. I look at the stock ticker and it shows that it's up 1.47. I look at text messages and see the last one I sent was at 1:47. I go to the store and everything is on sale at x1.47. Is it just an unexplainable coincidence, or am I just remembering the incidents where I see the number 147?

Others are more difficult to explain: one evening before I slept I was reading about a physicist working at Los Alamos (a lab I barely knew about), and the next morning I got a call from Los Alamos National Laboratory asking about a product we sell, the first time I had ever heard from them (or any laboratory).

Other times I may just be thinking of something, or reading something, and then hear of something related through an entirely different medium. For instance, just last week I read the words "late stage capitalism" in an article, a term I hadn't really heard before, and then seconds later I got an email from Twitter where one of the tweets (at the top of the list) read "This isn't late stage capitalism." Another time I got home from the shops with a new lamp, and the TV was on in the background. My wife asked: what kind of bulb does it take? And the person on TV, on cue, said "That would be a 40 watt bulb", just making an analogy and talking about something entirely different. Even right now, I take a 30 second break from writing and switch to reading a (political) news article that, right in the middle, starts talking about the phenomenon of strange coincidences and Carl Jung (completely unrelated to the topic of the article, which was far more pedestrian)!

Carl Jung, a Swiss analytical psychologist born in 1875, took coincidences very seriously, and spent much of his life trying to find an explanation for this ubiquitous phenomenon. He coined the term "synchronicity" to describe it, and wrote at length on the topic. He contrasted synchronicity with the more conventional universal causation, the bottom-up micro-causes we're familiar with. Besides knowing Albert Einstein personally, he also worked at length with quantum theory pioneer Wolfgang Pauli, and the two corresponded and published together in an effort to understand synchronicity from a quantum theory perspective. Their shared proposal has come to be called the Pauli–Jung conjecture.

While this conjecture mused that certain characteristics of quantum theory, such as its non-locality and entanglement, may provide a sound basis for synchronicity, it never went far beyond such speculation. I would propose that the theory explicated by Lockwood and expanded upon in this paper significantly develops and cements those intuitions held by both Jung and Pauli. On this view, the link between coincidences and conscious experience is indeed quantum entanglement. These "synchronicities" are subtle echoes of the downward causation that is literally how the mind, and the universe with it, functions. It is the perpetual construction and compression of relevant information out of infinite noise - even if that information sometimes concerns mundane topics like light bulbs, or the number 147.

The process of compression must necessarily reuse concepts in order to store new data in the most efficient way. As I have explained, the act of compression carries with it more consciousness, and we are more likely to find ourselves in timelines where there is more consciousness. So when you have a particular concept instilled in your mind, experiences that re-use that concept in novel ways are more likely to be the ones you experience. It is this re-using or development of concepts that we call coincidences, or that Jung calls synchronicities, and that many of us can testify to experiencing frequently.
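
As a toy illustration of why reusing concepts makes storage cheaper, the sketch below uses Python's standard `zlib` compressor as a stand-in. The theory does not specify any particular compression scheme, and the helper functions and the `memory`, `related`, and `unrelated` strings are all made up for this example.

```python
import zlib


def compressed_size(text: str) -> int:
    """Size in bytes of `text` after DEFLATE compression at the highest level."""
    return len(zlib.compress(text.encode("utf-8"), 9))


def incremental_cost(memory: str, experience: str) -> int:
    """Extra compressed bytes needed to store `experience` on top of `memory`."""
    return compressed_size(memory + " " + experience) - compressed_size(memory)


# Hypothetical "memory": the concepts of the lamp and its bulb are already stored.
memory = "We came home from the shops with a new lamp. What kind of bulb does it take?"

# A new experience that reuses stored concepts (lamp, bulb) ...
related = "What kind of bulb does the new lamp take? It takes a 40 watt bulb."

# ... versus one of similar length built from unfamiliar material.
unrelated = "Zyx qvj pfg 9183 kmd hrw 7 ctn jzb; oeu 5520 gqx vlk wyp 64 dfs mrh?"

related_cost = incremental_cost(memory, related)
unrelated_cost = incremental_cost(memory, unrelated)
print(f"related: {related_cost} extra bytes, unrelated: {unrelated_cost} extra bytes")
```

Under these assumptions the related experience should cost fewer additional bytes than the unrelated one, because the compressor can refer back to phrases it has already stored rather than encoding everything from scratch.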

The concepts are not always as simple as objects or themes. They may also be other patterns of experience or associations that exist in our neural configurations and that we can discern less readily: for example, not something as specific as a kettle, but rather the broader concept of cooking, eating, or even satisfaction in general. One can imagine that a more in-depth analysis of metaphor may provide more insight into the nature of these patterns.

It has been reported by psychologists that people are more likely to report coincidences during times of emotional stress. During such tumult our brains are perhaps tasked with finding changes in circumstances, an "escape hatch" so to speak. During this time we perhaps rely less on deeply ingrained patterns and instead seek to form new memories using patterns we have learned more recently. As a consequence, the emphasis on current mind states rather than longer-term memories could increase the likelihood of experiencing coincidences, and also affect our ability to test the phenomenon empirically - which we'll get to next.